It is not always easy to know how to organize your data in the Crushmap, especially when you want to distribute the data geographically while also separating different types of disks, e.g. SATA, SAS and SSD. Let's see what kind of Crushmap hierarchy we can come up with.

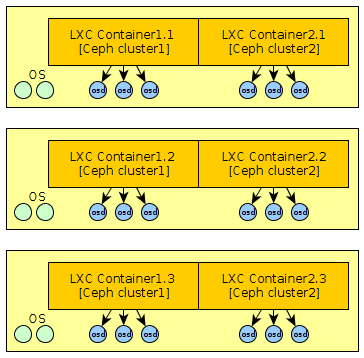

Take a simple example of a distribution …