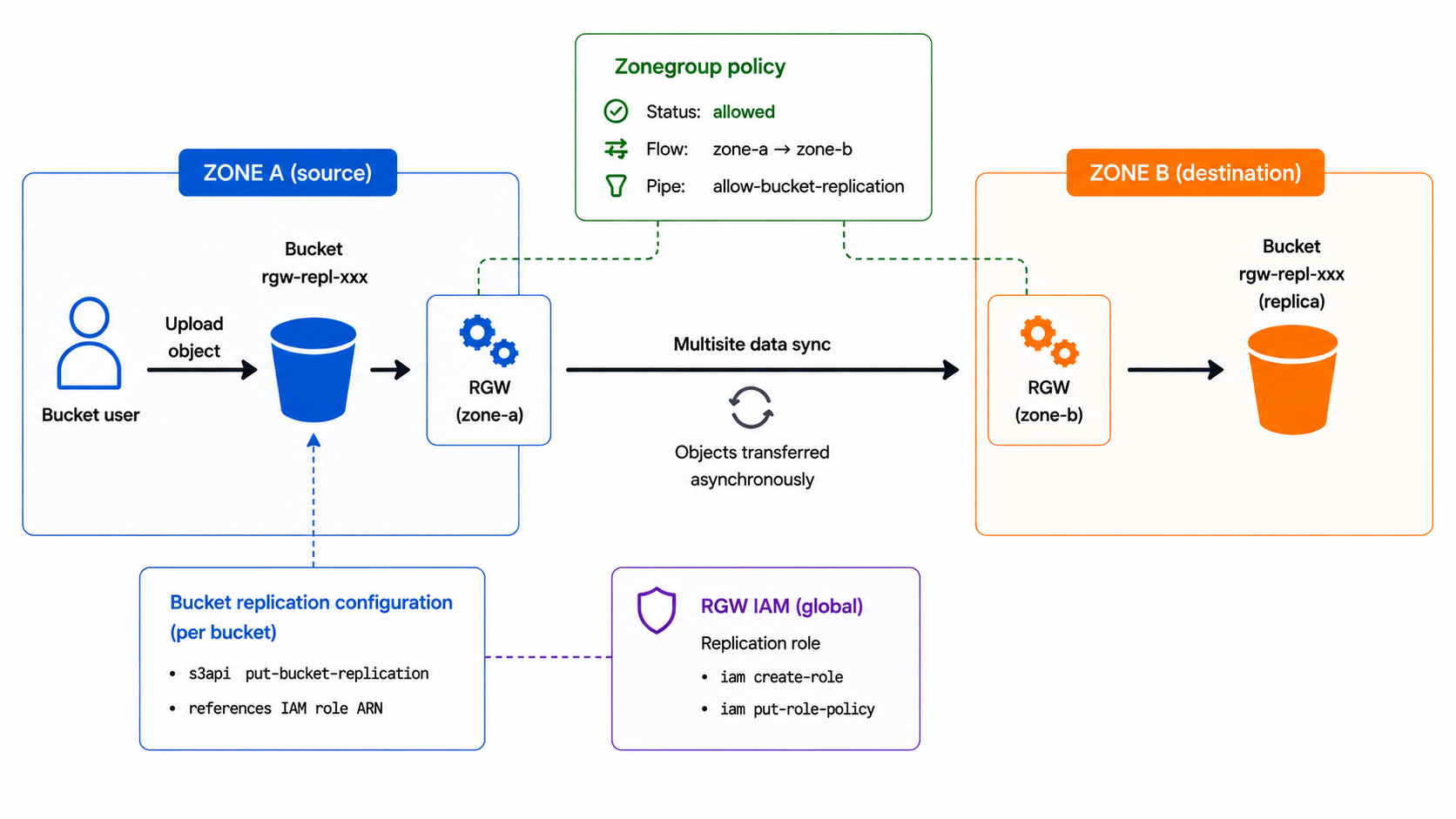

Manage Ceph RGW bucket-level replication using the AWS CLI.
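A minimal sketch of the configuration document that such a workflow passes to `aws s3api put-bucket-replication`, written in Python so it can be generated per bucket. It assumes RGW accepts the standard S3 `ReplicationConfiguration` schema (field support can differ between Ceph releases). The function name, bucket name, rule ID and empty `Role` are hypothetical placeholders, not values from the original post.

```python
import json


def build_replication_config(dest_bucket, rule_id="replicate-all", prefix="", role=""):
    """Return an S3 ReplicationConfiguration dict (hypothetical helper).

    Assumption: RGW accepts the standard S3 schema. AWS requires an IAM role
    ARN in "Role"; whether RGW uses it is an assumption, so it is configurable.
    """
    return {
        "Role": role,
        "Rules": [
            {
                "ID": rule_id,
                "Status": "Enabled",
                "Priority": 1,
                # An empty prefix filter matches every object in the bucket.
                "Filter": {"Prefix": prefix},
                # Rules that use "Filter" must state delete-marker behaviour.
                "DeleteMarkerReplication": {"Status": "Disabled"},
                # S3 expects the destination bucket as an ARN.
                "Destination": {"Bucket": f"arn:aws:s3:::{dest_bucket}"},
            }
        ],
    }


if __name__ == "__main__":
    # Print the document so it can be redirected into a JSON file.
    print(json.dumps(build_replication_config("dest-bucket"), indent=2))
```

Redirect the output to a file, e.g. `python3 make_replication.py > replication.json` (the script name is hypothetical), then apply it with `aws --endpoint-url <rgw-endpoint> s3api put-bucket-replication --bucket <source-bucket> --replication-configuration file://replication.json`.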

Internal RADOS Object Names Used by RadosGW

The naming of RADOS objects in RGW can sometimes be confusing. The following notes summarize the basic principles used to identify them:

The fundamental cases

| Case | Naming Format |
|---|---|
| In all cases (head object) | `<bucket-id>_<object-name>` |
| Stripes of a large object | `<bucket-id>__shadow_<prefix>_<stripe-id>` |
| Multipart upload | `<bucket-id>__multipart_<object-name>.<upload-id` … |
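The naming rules in the table can be expressed as a small Python sketch, for example to sort the output of `rados ls -p default.rgw.buckets.data` (assuming the default zone's data pool) into head, shadow and multipart objects. The helper names and sample IDs are hypothetical; only the prefixes from the table are used, and because the multipart format is truncated above, multipart objects are matched by their `<bucket-id>__multipart_` prefix alone.

```python
def head_object_name(bucket_id, object_name):
    # Head object: <bucket-id>_<object-name>
    return f"{bucket_id}_{object_name}"


def shadow_object_name(bucket_id, prefix, stripe_id):
    # Stripe of a large object: <bucket-id>__shadow_<prefix>_<stripe-id>
    return f"{bucket_id}__shadow_{prefix}_{stripe_id}"


def classify(rados_name, bucket_id):
    """Return 'head', 'shadow' or 'multipart' for an object of bucket_id, else None.

    Naive prefix test (hypothetical helper): a user object whose own name
    begins with '_shadow_' or '_multipart_' would be misclassified.
    """
    if not rados_name.startswith(bucket_id + "_"):
        return None  # belongs to another bucket, or is not an RGW data object
    rest = rados_name[len(bucket_id):]
    if rest.startswith("__shadow_"):
        return "shadow"
    if rest.startswith("__multipart_"):
        return "multipart"
    return "head"
```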

Ceph Versions: Open Source / RHCS / IBM

| Release Date | Version (Community) | Red Hat Ceph Storage | IBM Storage Ceph |
|---|---|---|---|
| Upcoming | Ceph 21.X "Umbrella" | | |
| 2025-11-18 | Ceph 20.2 "Tentacle" | RHCS 9 | IBM Storage Ceph 9 |
| 2024-09-26 | Ceph 19.2 "Squid" | RHCS 8 | IBM Storage Ceph 8 |
| 2023-08-07 | Ceph 18.2 "Reef" | RHCS 7 | IBM Storage Ceph 7 |
| 2022-04-19 … |

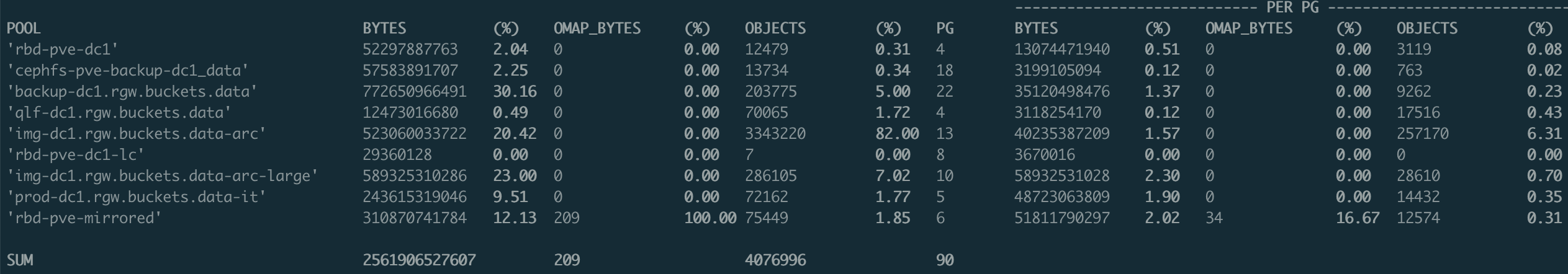

OSD Statistics per pool on Ceph

A simple awk command for analyzing OSD usage in Ceph.
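The awk one-liner itself is not reproduced here. As a hedged illustration of the same idea, this Python sketch counts how many PG copies each OSD holds per pool. It assumes the plain-text `ceph pg dump pgs_brief` layout, with the PG id (`<pool-id>.<pg-seq>`, sequence in hex) in the first column and the acting set (e.g. `[0,1,2]`) in the fifth; check the column order on your release before relying on it. The function name is hypothetical.

```python
import re
from collections import defaultdict

# PG ids look like "<pool-id>.<hex-seq>", e.g. "1.1f".
PGID_RE = re.compile(r"^(\d+)\.[0-9a-f]+$")

# CRUSH uses 2147483647 as a placeholder for a missing shard (e.g. in EC pools).
CRUSH_ITEM_NONE = 2147483647


def pgs_per_osd_per_pool(lines):
    """Count PG copies per pool and OSD: {pool_id: {osd_id: count}}.

    Assumed layout: PG id in column 1, acting set in column 5.
    """
    counts = defaultdict(lambda: defaultdict(int))
    for line in lines:
        fields = line.split()
        if len(fields) < 5:
            continue
        match = PGID_RE.match(fields[0])
        if not match:
            continue  # header or other non-PG line
        pool = int(match.group(1))
        for osd in fields[4].strip("[]").split(","):
            if osd and int(osd) != CRUSH_ITEM_NONE:
                counts[pool][int(osd)] += 1
    return {pool: dict(osds) for pool, osds in counts.items()}
```

For example, call `pgs_per_osd_per_pool(sys.stdin)` from a script fed by `ceph pg dump pgs_brief 2>/dev/null`.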

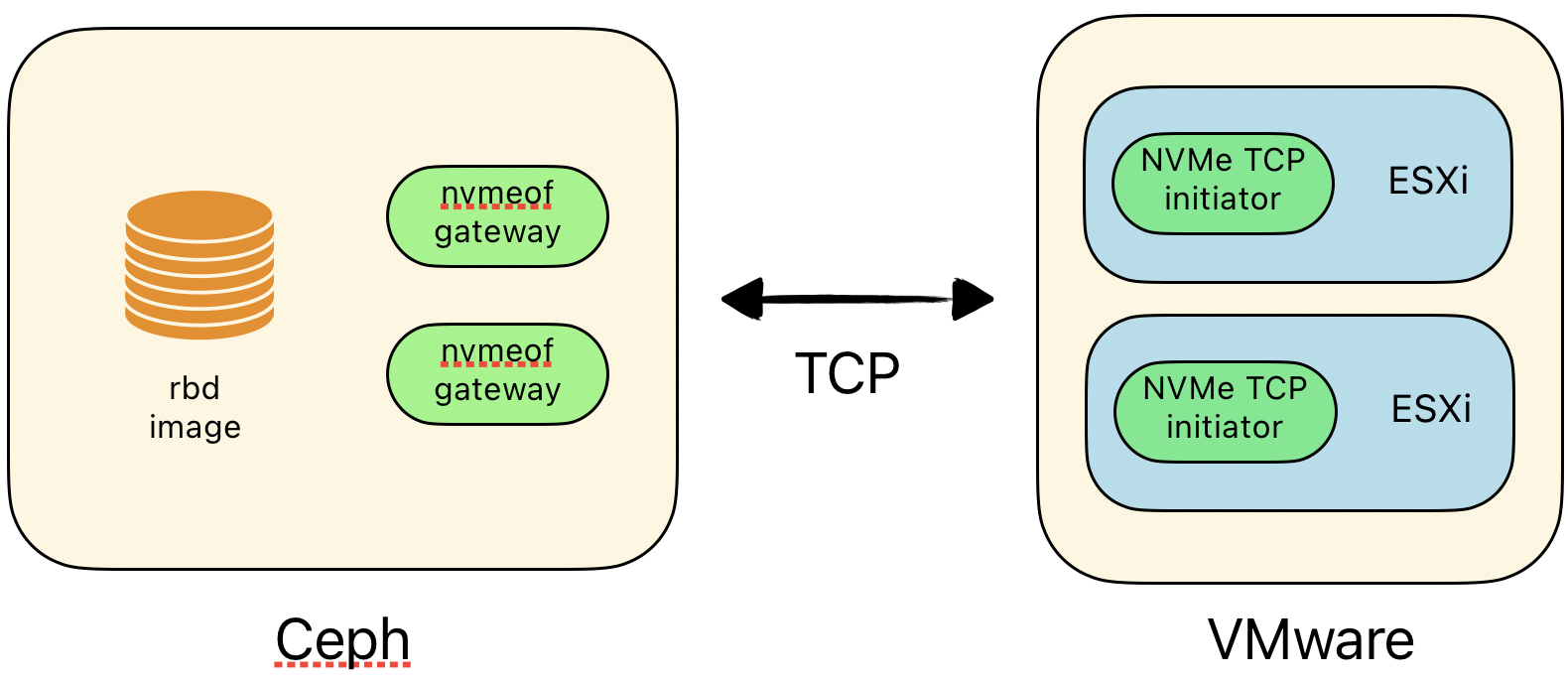

Test Ceph NVMe-oF

A quick test of NVMe over Fabrics (NVMe/TCP) and VMware...